automation to: search for text in a file or across multiple files. It can also change letter case, convert typographic quotes, and remove unnecessary spaces and unwanted characters.

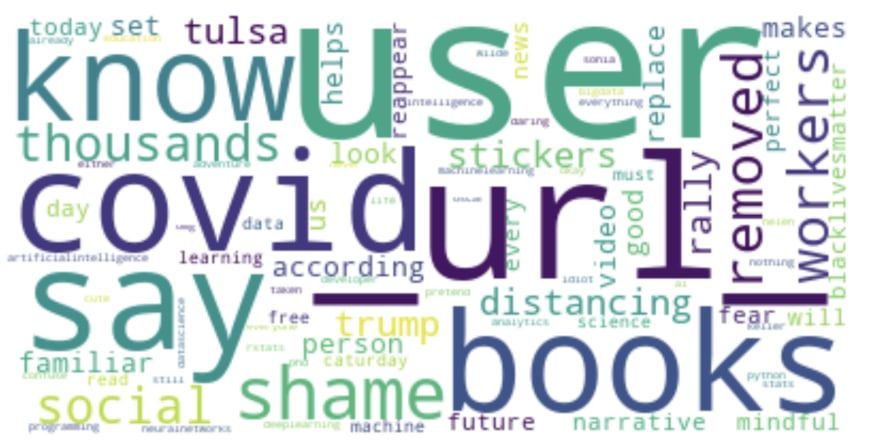

With this, you can also create your own online find-and-replace list. Text Cleaner (also called Clean Text) is an all-in-one online text cleaning and formatting tool that can perform many complex text operations. To remove specific stop words, enter them in the text field in comma-separated format (in other words, separate each word with a comma and a space, in that order).

When we work on NLP projects, we need to do text mining and data cleansing. NLTK and spaCy are the most popular Natural Language Processing tools for this.

1) Clear out HTML characters: A lot of HTML entities such as `&apos;`, `&amp;`, and `&lt;` can be found in most of the data available on the web. We need to get rid of these from our data. You can do this in two ways: by using specific regular expressions, or by using available modules or packages (such as Python's HTMLParser).

A common task is to detect named entities, and sometimes it makes sense to replace the original text with the corresponding entity labels. For example, all dates are replaced with DATE, all prices with MONEY, and so on. Imagine that you are modeling a list of documents using TF-IDF on n-grams: collapsing every date or price into a single token keeps each one-off value from becoming its own feature.
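The two cleaning steps described above can be sketched with the Python standard library alone. This is a minimal illustration, not a definitive implementation: `html.unescape` handles the entity decoding, and the two regular expressions are simplified stand-ins for a real named-entity recognizer (they only catch ISO-style dates and amounts with a leading currency symbol). The function and pattern names are hypothetical.

```python
import html
import re

# Simplified, illustrative patterns (assumptions, not a real NER model):
# ISO-style dates such as 2021-03-04, and amounts such as $12.50 or €3,000.
DATE_RE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")
MONEY_RE = re.compile(r"[$€£]\s?\d+(?:[.,]\d+)*")


def unescape_entities(text: str) -> str:
    """Decode HTML entities such as &amp; and &lt; into plain characters."""
    return html.unescape(text)


def replace_with_placeholders(text: str) -> str:
    """Replace matched dates with DATE and matched prices with MONEY."""
    text = DATE_RE.sub("DATE", text)
    return MONEY_RE.sub("MONEY", text)


if __name__ == "__main__":
    raw = "Tom &amp; Jerry paid $12.50 on 2021-03-04"
    print(replace_with_placeholders(unescape_entities(raw)))
```

For production data, a trained entity recognizer would normally replace the regular expressions, since dates and prices appear in many more formats than these patterns cover.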


You can also remove stop words that aren't removed by default.
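As a rough sketch of how a comma-separated list of extra stop words might be applied, the snippet below parses the field and filters a text with plain string operations. The helper names `parse_stop_words` and `remove_stop_words` are hypothetical, and whitespace splitting is a deliberate simplification of real tokenization.

```python
from typing import Set


def parse_stop_words(field: str) -> Set[str]:
    """Turn 'please, kindly, thanks' into a lowercase set of stop words."""
    return {word.strip().lower() for word in field.split(",") if word.strip()}


def remove_stop_words(text: str, stop_words: Set[str]) -> str:
    """Drop whitespace-separated tokens whose bare form is a stop word."""
    kept = []
    for token in text.split():
        # Ignore surrounding punctuation when comparing against the list.
        bare = token.lower().strip(".,;:!?\"'")
        if bare not in stop_words:
            kept.append(token)
    return " ".join(kept)


if __name__ == "__main__":
    extra = parse_stop_words("please, kindly, thanks")
    print(remove_stop_words("Please send the report, thanks!", extra))
```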

It leverages spaCy's `pipe` method for faster batch processing.


One of the first steps of working with text data is cleaning it, which includes removing less informative words and tokens such as stop words and punctuation. One approach runs a spaCy pipeline and removes unwanted parts from a list of texts.

The Text Pre-processing tool has some advanced options for text normalization. spaCy is an NLP package in Python with a wide range of capabilities: it can clean text, perform lemmatization, and extract entities (such as people or places). The Text Pre-processing tool uses the spaCy package by default.

To convert words to their roots, check the box for Convert to Word Root (Lemmatize). This option transforms derivative words into their root words. For example, the words "running," "ran," and "runs" all become the word "run" after you lemmatize them. That way, when you apply a machine-learning algorithm to analyze the words, the machine is able to recognize that all those words should be grouped together.

To remove digits, check the box for Digits. This option removes certain digit tokens (in other words, numbers) from the data. You might want to select this option because numbers can confuse some Natural Language Processing algorithms.

To remove punctuation, check the box for Punctuation. This option removes punctuation from the data. You might want to select this option because punctuation can confuse some NLP algorithms. Some punctuation tokens, such as the period in "Mrs.", are kept because they are meaningful.

To remove stop words, check the box for Stop Words. spaCy has different lists of stop words for different languages. You can see the full list of stop words for each language in the spaCy GitHub repo.
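The digit, punctuation, and stop-word options can be approximated directly in spaCy. The sketch below is an assumption about how such a cleaner might look, not the Text Pre-processing tool's actual code: the function `clean_texts` and its flags are hypothetical names. It uses `spacy.blank("en")`, which needs no model download, and streams the texts through `nlp.pipe` for batch processing. Lemmatization is left out because `token.lemma_` needs a trained pipeline such as `en_core_web_sm`, which this sketch does not load.

```python
import spacy

# Blank English pipeline: tokenizer and lexical attributes only.
nlp = spacy.blank("en")


def clean_texts(texts, remove_digits=True, remove_punct=True,
                remove_stop=True, extra_stop_words=()):
    """Return one cleaned string per input text (hypothetical helper)."""
    extra = {word.lower() for word in extra_stop_words}
    cleaned = []
    # nlp.pipe processes the texts as a stream instead of one call per text.
    for doc in nlp.pipe(texts):
        kept = []
        for token in doc:
            if token.is_space:
                continue
            if remove_digits and token.is_digit:
                continue
            if remove_punct and token.is_punct:
                continue
            if remove_stop and (token.is_stop or token.lower_ in extra):
                continue
            kept.append(token.text)
        cleaned.append(" ".join(kept))
    return cleaned


if __name__ == "__main__":
    for line in clean_texts(["The 3 cats sat on the mat.", "Mrs. Smith paid 20 dollars."]):
        print(line)
```

Each flag mirrors one checkbox, so a step can be switched off when the downstream model benefits from keeping numbers or punctuation.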